Improving Page Speed for Google Rankings

Google announced that it will consider the speed of the web site as part of its search engine ranking (Using site speed in web search ranking) – something I suspect that it has been doing for some time. We have known for a while that page speed is very important to how the user perceives the quality of the web site. You can significantly lower your bounce rate by improving page speed – because users will look at more of your site if the first page they request returns quickly. Now, it is just not user experience that suffers from a slow site; it is site ranking on Google – which means with this announcement slow sites attract fewer viewers because your site will appear father down the search results.

“Now slow performing code and slow database queries are a marketing issue.”

For on of my major web site serving Google AdWords slowness means lower revenue.

Page Caching

On the web site serving AdWords I had some slow leaf pages (outlying lower pages with the majority of the content details), the major entrance pages I had improved a while back using page caching. There are thousands of leaf pages – which meant I couldn’t cache them all because they would be forced from the cache for lack of room. I could see this in Google Webmaster tools, some days I had fast requests and some days slow results – depending if Google’s crawler traversed a cache page.

SQL Server

I knew that my issue was in the response of my SQL Server queries that fetched content to generate my dynamic ASP.NET pages. I set about optimizing my queries by using the procedure cache to figure out which queries where running the slowest

Every query that SQL Server executes is saved in the SQL Server query plan cache, regardless of whether that query is ad-hoc, inline parameterized queries in C# code, stored procedures, or just executed from the SQL Server Management Studio. Along with the query information is how long it took to execute, the number of executions and other statistics about the query.

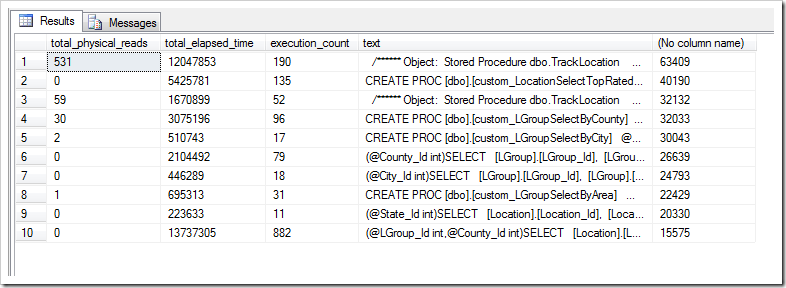

This is the query that I use to get the top ten slowest queries. Slow is defined as slowest average execution time across all executions since SQL Server started. I found the query below on the Internet, however optimized it so it runs faster:

SELECT total_physical_reads, total_elapsed_time, execution_count, [text], total_elapsed_time /execution_count FROM (SELECT TOP 10 * FROM sys.dm_exec_query_stats WHERE execution_count > 100 ORDER BY total_elapsed_time /execution_count DESC) AS qs CROSS APPLY sys.dm_exec_query_plan (qs.plan_handle) CROSS APPLY sys.dm_exec_sql_text(qs.sql_handle) AS st ORDER BY total_elapsed_time /execution_count DESC

I tuned the query so it will only return queries that have run more than 100 times. This excludes custom queries (like this one) from the SQL Management Studio.

The total_elasped_time column is the total amount of time spent running this query across all executions since SQL Server started last.

The execution_count column is the number of times the query has executed on SQL Server since it started last.

Dividing total_elasped_time by execution_count gives me the average time the query took to execute -- I want to go after those queries where this number is high.

The text column contains the query text. It is up to the reader to figure out if this is in a stored procedure, inline C# code, or adhoc.

Summary

On my site I tracked down the offending queries and over two days improved my site speed tremendously by tuning the queries. I rewrote some of them and added some covered indexes. That top spot on Google is so important for great web traffic – getting there would make my tuning worth the effort.

{6230289B-5BEE-409e-932A-2F01FA407A92}

Comments

Post a Comment